One promising application of robot-human interaction is on the manufacturing floor. In Pittsburgh, the most prominent example is the Advanced Robotics for Manufacturing Institute, which recently won Build Back Better Regional Challenge funding to create, among other programs, a “de-risking” center where small manufacturers can try out robotics solutions.

CMU researchers are thinking about the challenge, too. The first of many steps toward collaborative work could be an industrial robot strong enough to lift engine blocks and smart enough to predict its human partner’s actions. To that end, this past October, following three years of work, the Robotics Institute demonstrated for Ford Motor Company technology that could enable robots to partner with humans during manufacturing.

Ford has supported the team responsible for the tech’s development through its Ford University Research Program. The team includes Ruixuan Liu, a Ph.D. student in robotics who said in a statement that the goal is for robots to “help humans do tasks more efficiently.” That’s where the tracking and prediction of actions come in.

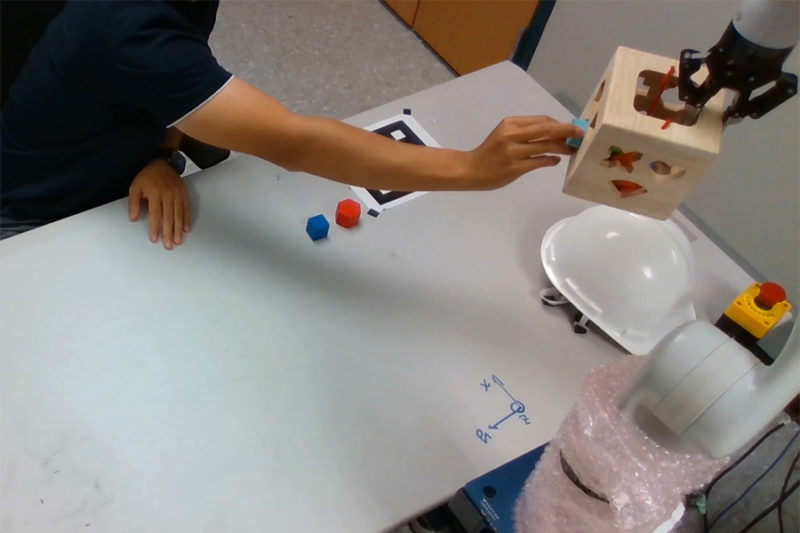

According to a CMU press release, at the demonstration, Ford researchers witnessed the team use a wooden box that was attached to a robotic arm to get an idea of how such a system could work down the line. A human inserted differently shaped blocks into holes on the box, while the robot was tasked with rotating the box so that the human could choose the right hole.


“The conventional approach is to first see which block the human picks,” said Changliu Liu, the assistant professor in the Robotics Institute who led the project. “But that’s a slow approach. What we do is actually predict what the human wants so the robot can work more proactively.”

Per CMU, the robot successfully assisted with the task during the demonstration regardless of which block the human chose or how the blocks were arranged, and it adjusted to the behavior of the different human partners who participated. Why does that matter? Extend this exercise to potentially dangerous materials on a manufacturing floor. Different people behave differently, and the safety of human workers can be better assured if a robot acts responsively rather than prescriptively.

The Robotics Institute team aims to continue and expand the research to multiple robots and workers.

“This is a very different way of doing things,” said Greg Linkowski, a Ford robotics research engineer who observed the demo. “It was an interesting proof of concept.”

Atiya Irvin-Mitchell is a 2022-2024 corps member for Report for America, an initiative of The Groundtruth Project that pairs young journalists with local newsrooms. This position is supported by the Heinz Endowments.

This editorial article is a part of Technology of the Future Month 2022 in Technical.ly's editorial calendar. This month’s theme is underwritten by Verizon 5G. This story was independently reported and not reviewed by Verizon 5G before publication.
