For Technical.ly’s 10-year anniversary, we’re diving deep into the archives for nostalgic, funny or noteworthy updates. This is part of a year-long series.

Over the years, we’ve covered OpenDataPhilly more than a few times.

From the project’s work compiling climate change data before Trump’s inauguration to sharing how to get new datasets of public interest featured, our history goes back to, well, the beginning of OpenDataPhilly: Mayor Michael Nutter actually signed the Open Data Executive Order during the second Philly Tech Week in April 2012.

One year later — at a time when open data as a concept was still “fairly unknown” to the public, said the Office of Innovation and Technology’s current data services manager, Kistine Carolan — OpenDataPhilly had released 46 datasets, including crime data and property assessments.

For the anniversary in May 2013, Technical.ly rounded up 10 original goals and deadlines to judge if they were met. With two NOs and two MAYBEs, we’ll say OpenDataPhilly hit around 70% of the executive order’s goals in those first 365 days. At the time, OpenDataPhilly had not yet created an open data working group for engagement or a data governance advisory board, but we cheered on the creation of a portal and the hiring of the City’s first chief data officer.

OpenDataPhilly has progressed in all kinds of ways in the past six years. Here’s how some of the initial executive order goals hold up:

1.) City datasets should be published and made available via an Open Data Portal.

The portal currently has 361 datasets available — one for almost every day of the year! — and 225 of those come from the City of Philadelphia itself. There have been more at times, Carolan said, but her team has consolidated some. Metadata is also shared for each City dataset to add further clarity for the user about what information it contains, which department produced it, who to reach out to for more information and the like. (See the metadata for the Neighborhood Energy Centers dataset here as an example.)

Carolan’s office also publishes blog posts and data visualizations about the datasets to “build out the transparency and accessibility of data,” Carolan said. “I think that’s a lot more than what people [originally expected] from open data.”

2.) This will require the dedication of a new position: Chief Data Officer.

In August 2012, the City of Philadelphia enlisted Mark Headd as its first CDO. After the most recent CDO, “hacker in black” Tim Wisniewski, stepped down this past November, Geographic Information Officer Henry “Hank” Garie added the CDO role to his workday earlier this month.

3.) The Mayor and the Chief Innovation Officer will establish an Open Data Working Group, *and* 5.) Within 120 days from the Effective Date of this Order, the Mayor shall appoint a Data Governance Advisory Board.

A few working groups have existed over the past few years. Chief Information Officer Mark Wheeler told us he was a part of an advisory board from 2014 to 2016, but it’s since fizzled out. However, there is an active Google discussion group for anyone who wants to chat about open data and government transparency.

Carolan wasn’t heading open data work at the time, but guessed that such a group would have been helpful at the outset to ensure stakeholder engagement. Now, though, the City knows its datasets are being accessed, and it regularly receives data support tickets as feedback. It also invested in community outreach efforts such as use trainings and hackathons.

9.) Each department must create a catalog of its open information.

Users can search the portal by organization, but Carolan said she’s noticed that “most folks are looking for topic-based info, not necessarily a department.”

10.) The data must be in an “open format.”

Open format means the data must be accessible and machine readable so users don’t need to do much cleaning or to use their own apps to read it. It also means the datasets are downloadable in multiple formats (CSV, SHP, etc.) and include context to help users understand what the data means.
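To give a sense of what "machine readable" means in practice, here is a minimal Python sketch, not anything published by the City. It parses a small CSV with the standard library and counts rows by ZIP code with no manual cleanup. The sample rows, the column names (`name`, `zip_code`, `phone`) and the helper functions `load_rows` and `count_by` are all hypothetical and only stand in for a real download from the portal.

```python
import csv
import io

# Hypothetical sample standing in for a downloaded CSV; the column names
# and values are invented for illustration, not taken from a real City dataset.
SAMPLE_CSV = """name,zip_code,phone
Example Energy Center A,19104,555-0100
Example Energy Center B,19121,555-0101
Example Energy Center C,19104,555-0102
"""


def load_rows(csv_text):
    """Parse CSV text into a list of dicts keyed by the header row."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def count_by(rows, column):
    """Count how many rows share each value in the given column."""
    counts = {}
    for row in rows:
        counts[row[column]] = counts.get(row[column], 0) + 1
    return counts


rows = load_rows(SAMPLE_CSV)
centers_per_zip = count_by(rows, "zip_code")
print(centers_per_zip)
```

Because the header row names each field, the same few lines would work on any well-formed CSV, which is roughly the convenience an open format is meant to provide.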

As the team grows its capacity to build datasets, Carolan said, there’s also less of a need to manually update them. That means new information can be added to them faster, allowing users to see more accurate, real-time data. That’s all part of making City data more accessible.

###

Since 2012, Wheeler believes there’s been a huge evolution in how the City uses and shares data. At the time, he said, the City hadn’t thought much about how a single dataset could be of use to multiple departments, or how one issue could appear in multiple sets. Now, it wants to make sure the data is being properly used by City officials and the public and that there’s increased communication between the two.

Wheeler said the department is now less interested in finding more data and more inclined to make better use of the models they have. Similarly, Garie said the City is “thinking more about how those datasets can interact or come together to solve problems.”

“We’re not in the place where we’re trying to uncover a lot anymore,” Wheeler said. “We’re trying to understand the models we have.”

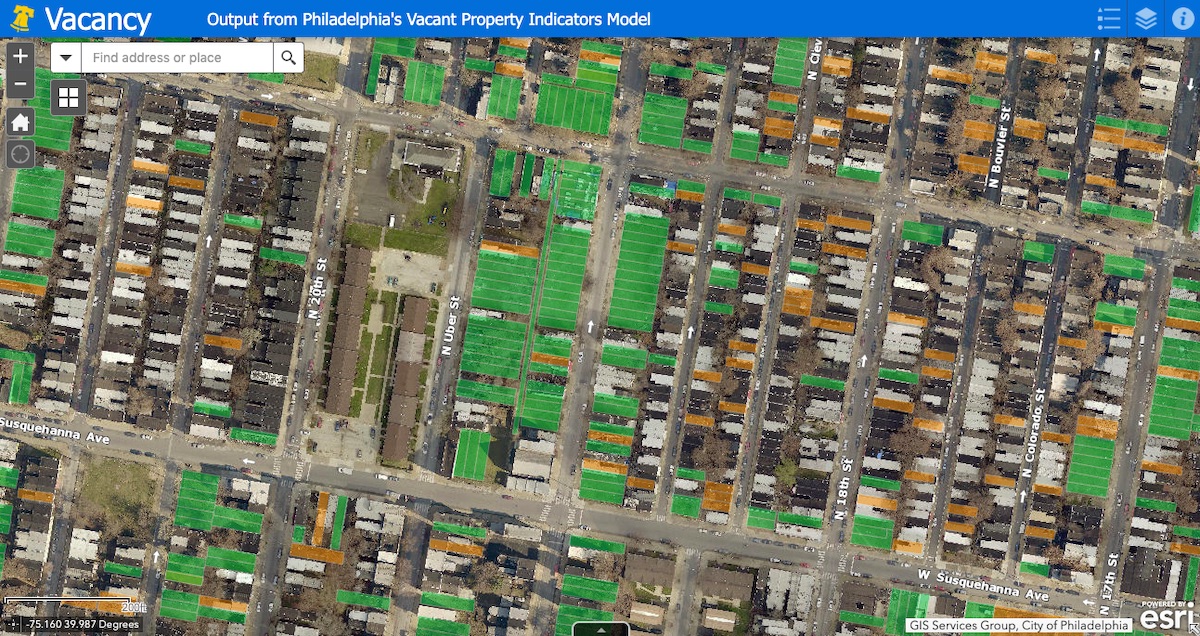

The City’s Vacant Property Indicators Model, for instance, indicates the shift from simply publishing data to using it to identify where vacancies might be, Carolan said.

“None of the datasets that feed into it were initially designed to predict or provide a confidence level on the locations of building vacancy, but we were able to repurpose many of the open datasets to feed into this data model to do so,” she said.

The ongoing goal — beyond continuously releasing more City data to the public — according to Garie: “As we identify problems internally the City needs to address, we can also rely on data to make decisions.”
