Chase Reid is a programmer and a coder. Chase Reid is also just a junior in high school.

The Tatnall School student recently competed in Rowan University’s ProfHacks 2017 Hackathon. That’s where he debuted Lyre, an app he developed in only 24 hours. While the program didn’t place in the competition, Reid was up against college undergrad and graduate students. Given his age, the Delaware native made a name for himself.

We talked with Chase about his work on Lyre, his interest in coding, and his big new plans.

###

What got you interested in coding and design?

At the age of nine I received my first iPod Touch and within 24 hours I discovered how to jailbreak — but I wanted more. I wanted to learn how jailbreaks worked, what was underneath the surface of the touch screen device that had the world in its grasp. That’s when I first dove into code. I began exposing myself to Objective-C (a steep learning curve for a beginner) and C++.

By the time I was 11, my interest had shifted to web development, where I learned the basics — HTML and CSS — then PHP and Ruby on Rails, both of which I worked with until I was 15. I made the jump to Python around that time and have been creating most of my apps and works in Python [since].

What’s the last piece of software you coded?

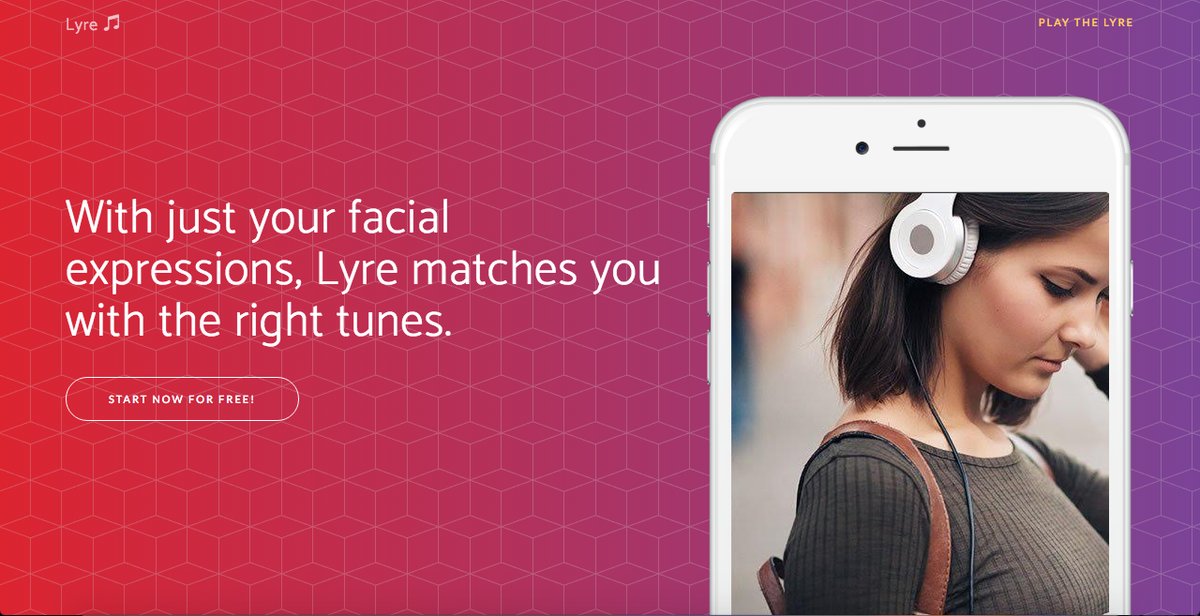

My latest work was a project I built during the ProfHacks 2017 Hackathon at Rowan University. I attempted to be clever by naming it Lyre, the musical instrument of Apollo. In its most simple form, Lyre scans your face for emotions and recommends songs for you based on said emotion.

What about in its not-so-simple form?

What goes on behind the scenes is quite interesting. A deep, convolutional neural network, trained on a database of faces and emotions, returns probabilities that coincide with various emotions. Lyre takes the highest probability and then, in conjunction with the Spotify API, returns music specific to that mood.

The music genres were pre-selected based on the results of a study that shows how different types of music affect certain emotions. My [partner] and I had 24 hours to build it.
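For readers curious what the "take the highest probability, then pick a genre" step might look like, here is a minimal Python sketch. It is not Lyre's actual code: the emotion labels, the genre table, and the function names `pick_emotion` and `recommend_genre` are all hypothetical, and the probabilities are made-up numbers standing in for a network's output.

```python
# Hypothetical emotion-to-genre table; Lyre's real mapping came from a study
# that the interview does not detail.
EMOTION_TO_GENRE = {
    "happy": "pop",
    "sad": "acoustic",
    "angry": "metal",
    "neutral": "jazz",
}


def pick_emotion(probabilities):
    """Return the emotion label with the highest probability."""
    if not probabilities:
        raise ValueError("probabilities must not be empty")
    return max(probabilities, key=probabilities.get)


def recommend_genre(probabilities):
    """Map the most likely emotion to a pre-selected genre."""
    emotion = pick_emotion(probabilities)
    return emotion, EMOTION_TO_GENRE[emotion]


# Made-up scores standing in for the classifier's output on one face.
example = {"happy": 0.08, "sad": 0.71, "angry": 0.06, "neutral": 0.15}
emotion, genre = recommend_genre(example)
print(emotion, genre)
```

Keeping the genre table separate from the classifier means the mapping can be changed without retraining anything.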

How’d you get started on this project?

Getting started is always the hard part.

When I first arrived at the Hackathon we were absolutely dumbfounded as to what we were going to work on. After returning from the opening ceremonies and settling into our workstation, influenced heavily by the music playing in the background, we both decided we should make something that incorporates music. I then began writing the convolutional neural network in Python.

It was a bit difficult finding a freely accessible database with enough images to train the network on. However, after numerous searches, I found the Cohn-Kanade AU-Coded Facial Expression Database. I let the network train itself and after 200 epochs it converged with a validation accuracy of 81 percent.

From there, the task was implementing the weights of the neural network into a Flask web application written in Python, and then bringing it all together with the Spotify API — which was successfully done with three hours of the competition left to spare!
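As a rough illustration of the last step, the sketch below builds a request URL for the Spotify Web API search endpoint from a genre name. This is an assumption about how such a lookup could be made, not a description of how Lyre did it; the helper `build_search_url` and its `limit` default are hypothetical, and a real request would also need an OAuth access token in the `Authorization` header.

```python
from urllib.parse import urlencode

# Spotify Web API search endpoint.
SEARCH_ENDPOINT = "https://api.spotify.com/v1/search"


def build_search_url(genre, limit=10):
    """Build a track-search URL filtered by genre (hypothetical helper)."""
    params = {"q": f"genre:{genre}", "type": "track", "limit": limit}
    # urlencode percent-encodes the query, including the colon in the filter.
    return f"{SEARCH_ENDPOINT}?{urlencode(params)}"


url = build_search_url("jazz", limit=5)
print(url)
```

Only the URL is constructed here, so the snippet runs without network access or credentials.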

What programming language is Lyre written in?

The entirety of the project was written in Python, from the neural network that learned to detect facial emotions to the backend that served the web app. The front end was developed with JavaScript for the webcam interface, AJAX, HTML, and CSS.

What did you learn from Lyre?

I greatly improved upon my skills with Python, interacting with APIs, and implementing neural networks into live projects. Previously, all of my work with neural networks had been self-devoted research without actual application. Additionally, all of my interaction with APIs had been via wrappers. This project allowed me to break my boundaries, try something new, and become more adept at a language I’ve been working with for a few years now.

Any new ideas coming down the line?

Yes! Something that we started back in July of 2016 has now become our preeminent interest. I’d say we’re about a month out from its release and it’s totally awesome. It’s called Creme. It’ll be available for all platforms.

Can you tell us more about Creme?

We joke around that it’s our crusade to transform the hookup culture that pervades our society, yet it’s become less of a joke and more of a reality. The beauty of face-to-face interaction has been seemingly lost. That’s where Creme shines.

And the best part — it’s all centered around the quaint and personality-filled coffee shop in town that we all love to go to. We truly believe that your 20s is a time for growth and developing lifelong connections, and the hookups can’t do that. There has to be another way, another avenue. We’re gunning to be that avenue.
