We’ve heard the story time and time again: A company leader, a teacher or maybe an athlete comes under fire after an insensitive tweet or Facebook post from five years ago surfaces.

It was a concern that Thomas Colaiezzi had as an executive in the fitness industry, where he’d regularly hire young people to staff gyms or front desk positions.

“We were running into problems with their social media posts, and realized if we’d looked into them, we could have avoided some problems,” he said.

And after looking for some services that could help with the process, and talking to some professionals in the human resources and legal fields, he realized that social media monitoring was a pretty untapped market. So in 2019, he launched LifeBrand, which uses AI and machine learning technology to scrub the platforms of a social media user for potentially “harmful” posts. That could mean anything from curse words to racist language to bashing a competing company online.

The startup offers both B2B and B2C services: an individual can connect their social media platforms and run an audit on their own to see how many potentially harmful posts they have shared over the years. The audit is free and shows a couple of flagged posts, but seeing the full results and deleting posts in real time costs $14.97.

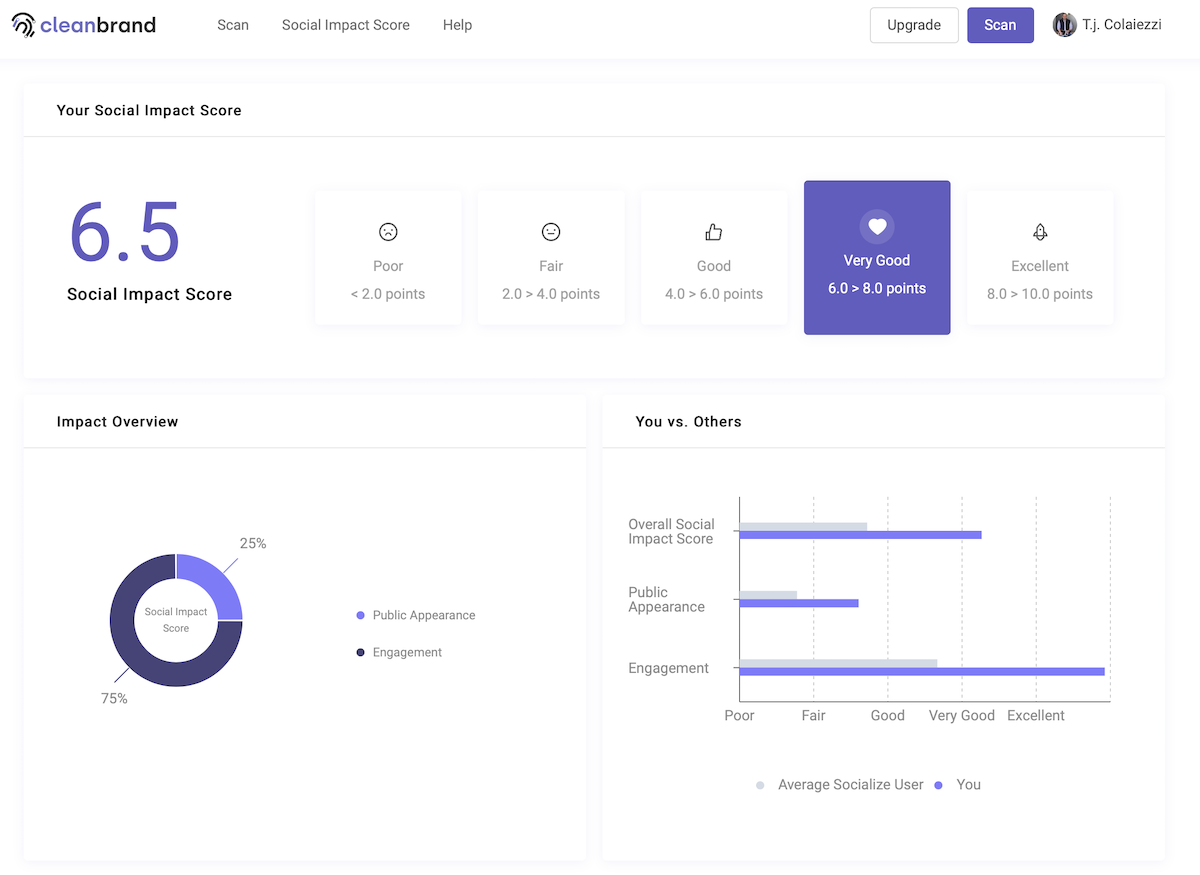

LifeBrand’s platform. (Courtesy image)

Companies that use the service will often do so for potential candidates, Colaiezzi said. It can essentially replace a hiring manager’s task of scoping out a candidate’s online presence and save a lot of time.

“Our technology is more efficient than manually doing it, but also eliminates the potential for human bias during the process,” the founder said.

Folks who do go through the screening must be alerted, Colaiezzi said. But there’s a wealth of ways it could be applied.

If you’re curious, like me, about what might be flagged, you can try it out for free. Between my 11 years on Facebook and nine years on Twitter, the service found 71 potentially harmful posts, the majority of which were flagged (probably unsurprisingly to my mother) for either curse words or strong language and ideas tweeted as quotes while I was reporting. Or, you know, just tweeting about “House Hunters.”

Really loving watching House Hunters during this time. Currently in the middle of a global pandemic but Wendy and Jacob in Cleveland, Ohio are debating how many fucking bathrooms are necessary and if they should get a colonial vs. a victorian.

— Paige Gross ✨ (@By_paigegross) March 23, 2020

The machine learning technology is not just looking for specific words, but also notes things like tone, Colaiezzi said. Input from medical industry investors has shown the founder that it could be used to scan for medical professionals potentially breaking HIPAA, as well. A company that uses the service can also input terms like their own brand name or a competitor’s to see if potential or current employees are speaking badly about them online.

The LifeBrand team is currently made up of nine full-time employees in its office in Newtown Square (in non-pandemic times, that is). Colaiezzi said he’s hoping to scale up to 25 employees by October, thanks to some seed money and a Series A round he hopes to close by the end of the year.

The founder said he’s learned that what’s offensive or appropriate in the workplace can depend entirely on the individual making the hiring decision, which is why the service can be customized. The company is currently putting together a group of people from different cultural backgrounds and with varying opinions to ensure that the technology understands the range of what’s considered “appropriate.”

“You know, the feeling is that people generally understand what is or isn’t OK to put out there online,” Colaiezzi said. “But we’re finding out it’s not as ‘common sense’ as we might have thought.”
