While a mathematical model might seem totally fair and objective, the algorithms that make up much of the artificial intelligence we use every day often have built-in bias.

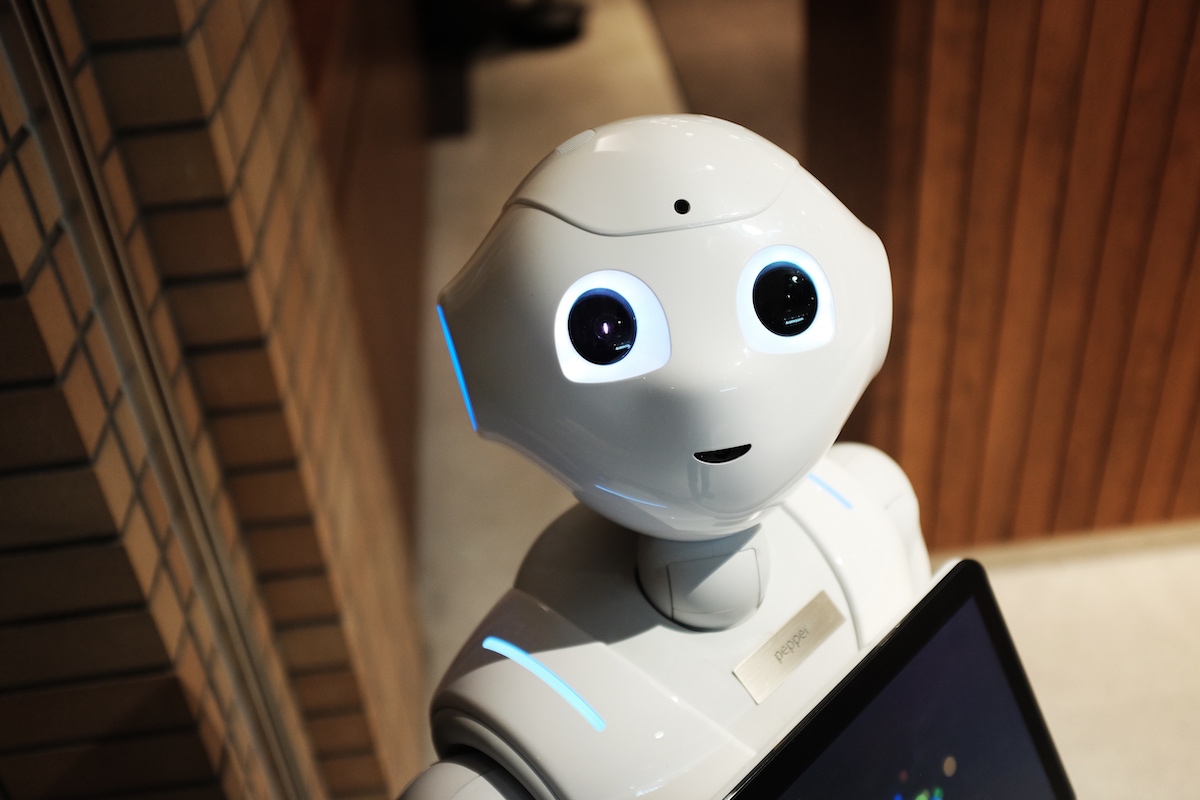

From self-driving cars to tools that help judges divert low-risk individuals from prison, technology that was built to make our lives easier is susceptible to prejudice or influence. And as AI becomes more commonplace in consumer-based technology that we use every day (hello, iPhone face recognition), it’s important to consider how technologists can prevent bias in the decision-making process.

This was the topic of “Fighting Bias in AI,” a conversation held Tuesday by Drexel University’s College of Computing & Informatics (CCI) and CCI’s Diversity, Equity & Inclusion Council as part of Philly Tech Week 2021 presented by Comcast. The group kicked off the session with a classic case of AI bias: MIT Ph.D. candidate Joy Buolamwini, a Black woman, built a tool designed to help her see herself in images of people who inspired her. But the AI in the smart mirror rendered the tool ineffective until she tried on a white mask. (Check out more from her story in the “Coded Bias” documentary currently on Netflix.)

“She went through a lot of public-facing software, there were a few other software systems that failed in similar ways,” said Edward Kim, an associate professor of computer science. “There are failures up to 35% in face detection algorithms on darker-skinned women, essentially attributed to data sets that were trained on primarily male and white examples.”
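Gaps like the one Kim describes only show up when results are broken out by group rather than averaged together. A minimal sketch of that kind of check follows. The function name, group labels and sample results are hypothetical and made up for illustration, not taken from the studies he cited.

```python
from collections import defaultdict


def failure_rate_by_group(records):
    """Return {group: share of records where detection failed}.

    `records` is an iterable of (group, detected) pairs. This is a
    hypothetical helper for illustration, not part of any library.
    """
    totals = defaultdict(int)
    failures = defaultdict(int)
    for group, detected in records:
        totals[group] += 1
        if not detected:
            failures[group] += 1
    return {group: failures[group] / totals[group] for group in totals}


# Made-up detection results, for illustration only.
results = [
    ("group_a", True), ("group_a", True), ("group_a", True), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", True),
]

rates = failure_rate_by_group(results)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.0%} of faces not detected")
```

A single overall accuracy number would hide the difference between the two groups here, which is why the breakdown matters.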

But there are steps technologists can take to correct this, said Logan Wilt, chief data scientist of applied AI at the Center of Excellence at DXC Technology. And it often starts with your data.

One of the first things you need to do is access and clean your raw data set, because “data by and large is never clean,” she said. “It usually has to be prepped for AI.” You also have to visualize and explore your raw data: Visualize what’s missing and explore the features you’ll be using to build your predictive tools. If race or gender is an identifier in your tool, yet your data set doesn’t reflect the real population, bias will happen.
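Those first two steps can be roughed out in a few lines of code. The sketch below is one possible way to do it, not Wilt’s method: it counts missing values per field, then compares each group’s share of the data set against a reference population. The helper names, sample rows and 50/50 reference shares are all assumptions chosen for illustration.

```python
from collections import Counter


def missing_counts(rows, fields):
    """Count the rows in which each field is absent or None."""
    return {f: sum(1 for r in rows if r.get(f) is None) for f in fields}


def representation_gap(rows, field, reference_shares):
    """Share of each group in the data minus its share in a reference population.

    A negative value means the group is underrepresented in the data.
    """
    values = [r[field] for r in rows if r.get(field) is not None]
    if not values:
        return {}
    counts = Counter(values)
    total = len(values)
    return {
        group: counts.get(group, 0) / total - share
        for group, share in reference_shares.items()
    }


# Hypothetical rows; a real data set would be loaded from a file or database.
rows = [
    {"gender": "female", "age": 34},
    {"gender": "male", "age": None},
    {"gender": "male", "age": 51},
    {"gender": "male", "age": 29},
    {"gender": None, "age": 42},
]

missing = missing_counts(rows, ["gender", "age"])
# Assumed reference shares, for illustration only.
gaps = representation_gap(rows, "gender", {"female": 0.5, "male": 0.5})

print("Missing values per field:", missing)
print("Representation gap vs. reference:", gaps)
```

In this made-up sample, women are 25 percentage points short of the assumed reference share, the kind of mismatch worth catching before any model is trained.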

That rolls into her third step: Build data pipelines. Plan for how you’ll keep your data pool up to date and expanding. Some great projects never make it into production because they’re lacking a data pipeline, Wilt said. Plan for this step early and often in setting up your algorithm.

Even if the math is perfect, there will be times your system encounters something it wasn’t built to handle, Kim said. For example, Tesla tests its self-driving cars for a variety of incidents, but if a piano suddenly appears in the middle of the road, the car still might not know what to do.

But just as important as the data and the math, if not more so, are the folks behind the product, Wilt said. The conscious or unconscious biases of those building a product will ultimately shape how it works, as in Buolamwini’s case.

“It’s a math problem, a data problem and a human problem,” Kim agreed.

Calls for diverse teams in tech aren’t new, but the problem persists. In 2019, Asia Rawls, director of software and education for the Chicago-based intelligent software company Reveal, called out at the HUE Tech Summit that only 2% of technologists in Silicon Valley are Black.

And Wilt closed out with why that’s ultimately very important:

“When you’re building AI for models that will have an impact on people and their lives, then the team that you select to build those models are who are going to be aware of the modern world,” she said. “The data might be selective of the history.”

This editorial article is a part of Tech for the Common Good Month of Technical.ly's editorial calendar. This month’s theme is underwritten by Verizon 5G. This story was independently reported and not reviewed by Verizon before publication.
